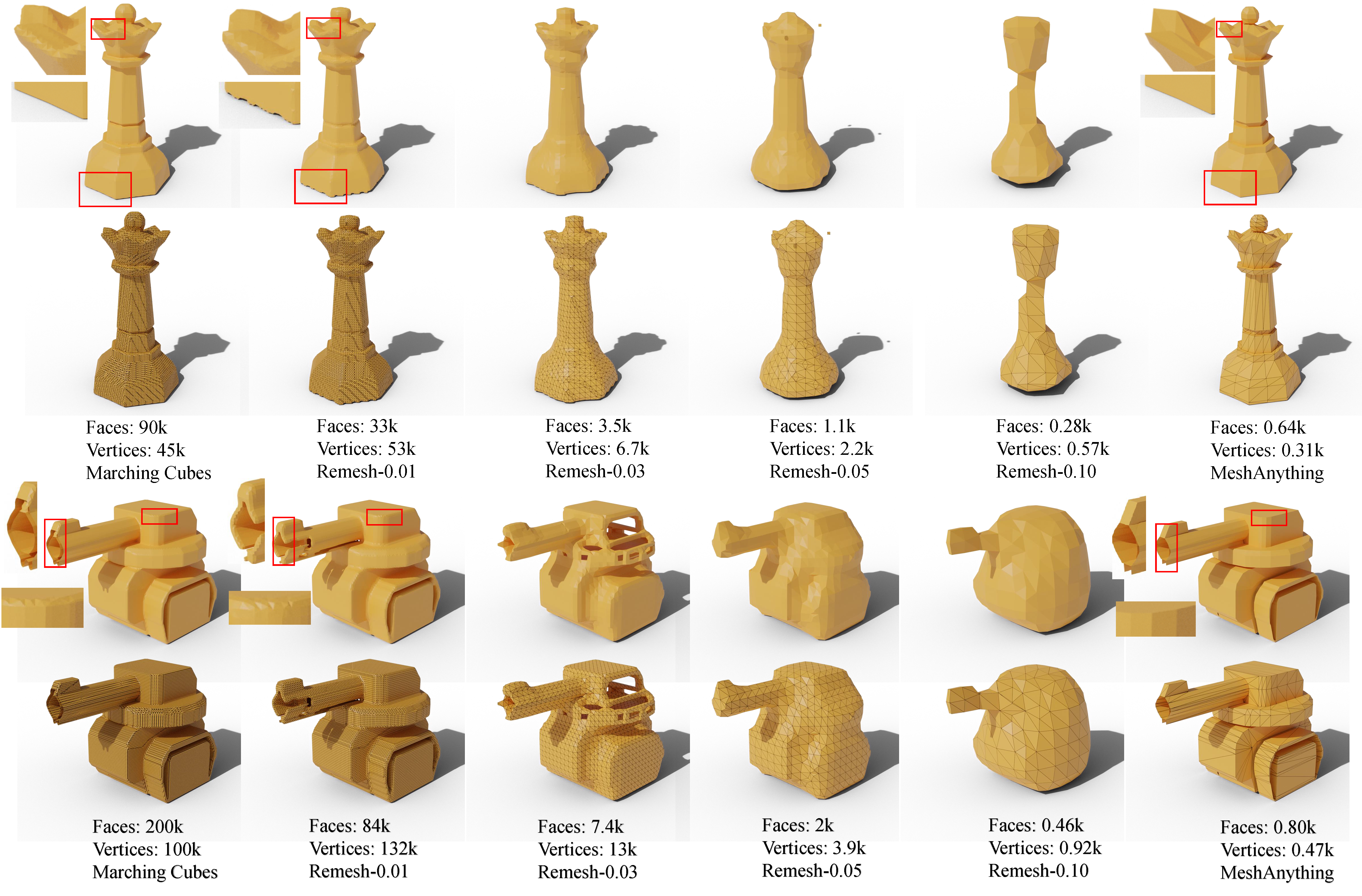

MeshAnything generates meshes with hundreds of times fewer faces, significantly improving storage, rendering, and simulation efficiencies, while achieving precision comparable to previous methods.

Recently, 3D assets created via reconstruction and generation have matched the quality of manually crafted assets, highlighting their potential to replace them. However, this potential is largely unrealized because these assets must be converted to meshes for 3D industry applications, and the meshes produced by current mesh extraction methods are significantly inferior to Artist-Created Meshes (AMs), i.e., meshes created by human artists. Specifically, current mesh extraction methods rely on dense faces and ignore geometric features, leading to inefficiencies, complicated post-processing, and lower representation quality.

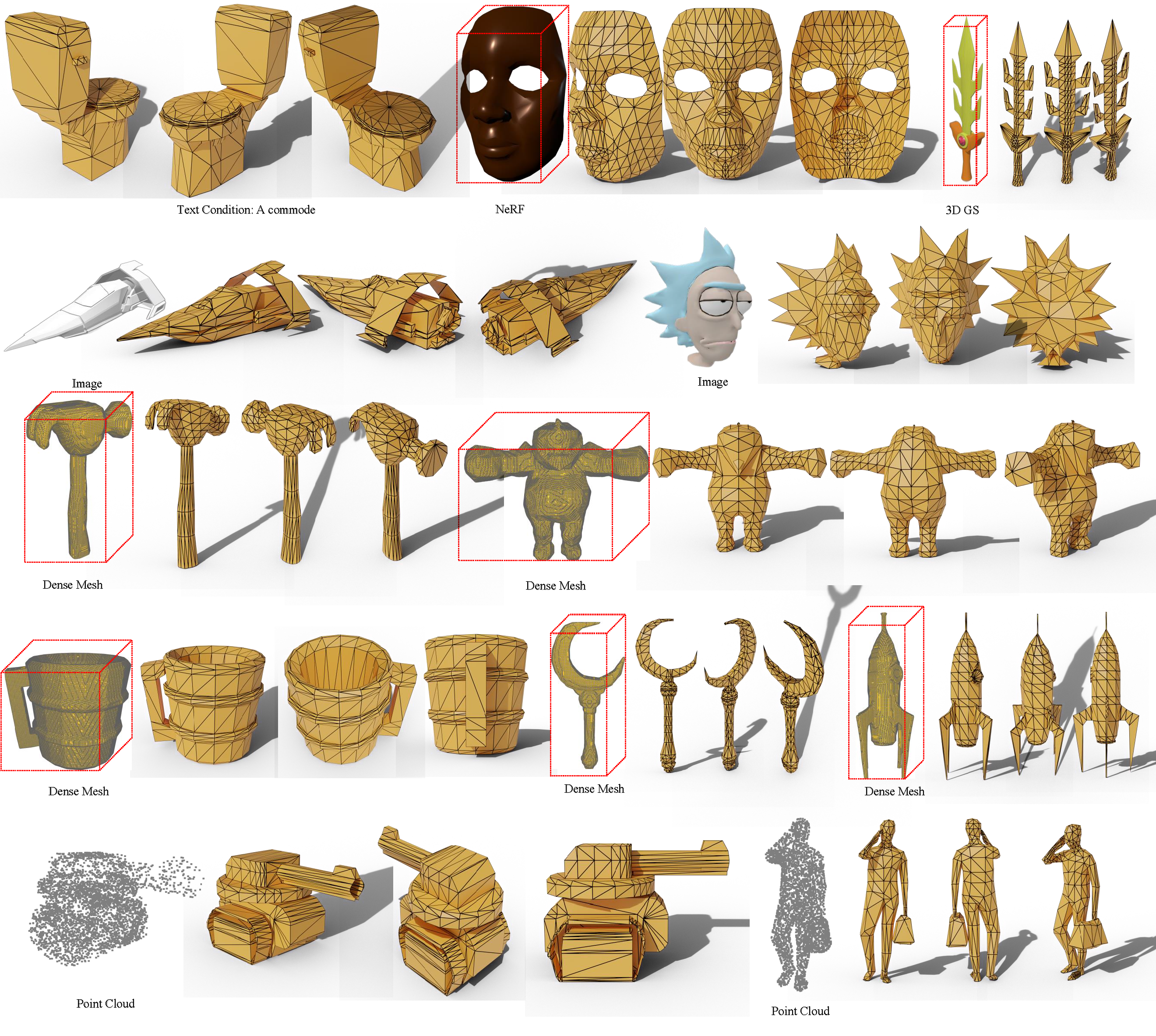

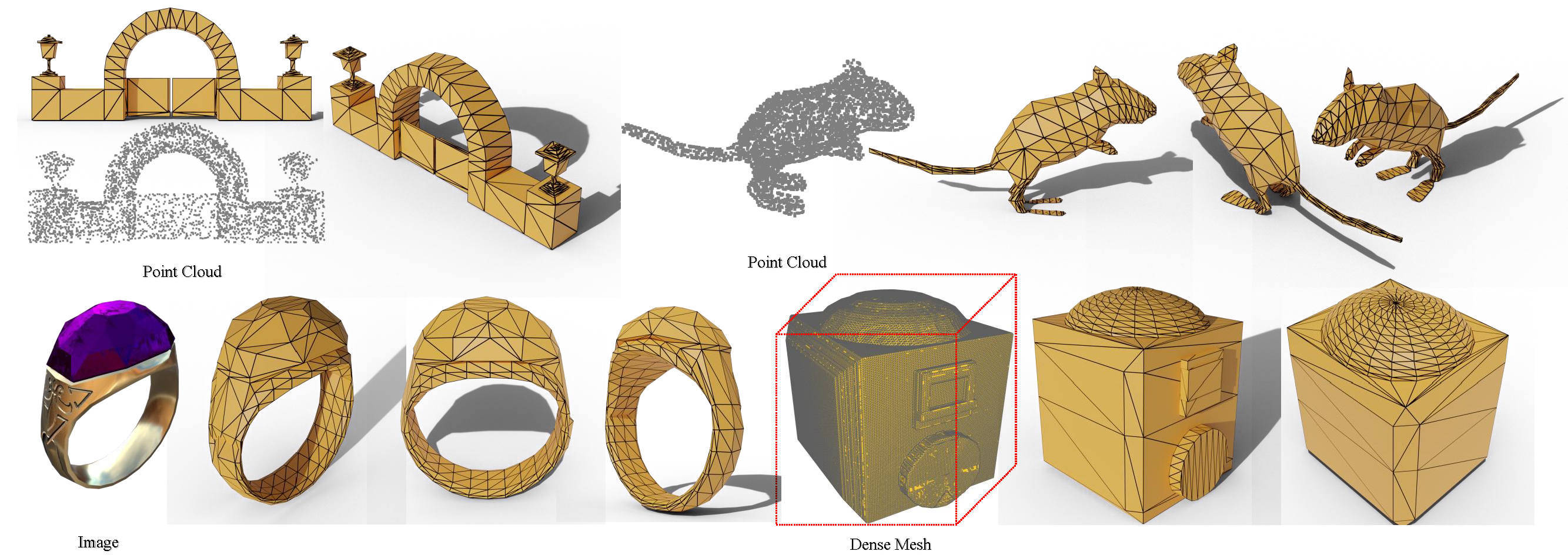

To address these issues, we introduce MeshAnything, a model that treats mesh extraction as a generation problem, producing AMs aligned with specified shapes. By converting 3D assets in any 3D representation into AMs, MeshAnything can be integrated with various 3D asset production methods, thereby enhancing their application across the 3D industry.

The architecture of MeshAnything comprises a VQ-VAE and a shape-conditioned decoder-only transformer. We first learn a mesh vocabulary using the VQ-VAE, then train the shape-conditioned decoder-only transformer on this vocabulary for shape-conditioned autoregressive mesh generation. Our extensive experiments show that our method generates AMs with hundreds of times fewer faces, significantly improving storage, rendering, and simulation efficiencies, while achieving precision comparable to previous methods.
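To make the "mesh vocabulary" idea concrete, here is a minimal sketch of the quantization step at the heart of any VQ-VAE: each continuous feature vector is replaced by the index of its nearest codebook entry, and those indices are the tokens a transformer can then model. The codebook values, feature vectors, and function names below are illustrative assumptions, not the MeshAnything implementation, and the encoder, decoder, and training losses are omitted.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(features: List[List[float]], codebook: List[List[float]]) -> List[int]:
    """Map each feature vector to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: squared_distance(f, codebook[k]))
        for f in features
    ]


def dequantize(tokens: List[int], codebook: List[List[float]]) -> List[List[float]]:
    """Look up the codebook vector for each token index."""
    return [list(codebook[t]) for t in tokens]


# Hypothetical 4-entry codebook and encoder outputs (made-up values).
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
features = [[0.1, -0.1], [0.9, 0.2], [0.2, 0.8], [0.7, 0.9]]

tokens = quantize(features, codebook)          # discrete "mesh vocabulary" indices
reconstructed = dequantize(tokens, codebook)   # what a decoder would receive
```

In a real VQ-VAE the codebook is learned jointly with the encoder and decoder; the lookup itself stays this simple.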

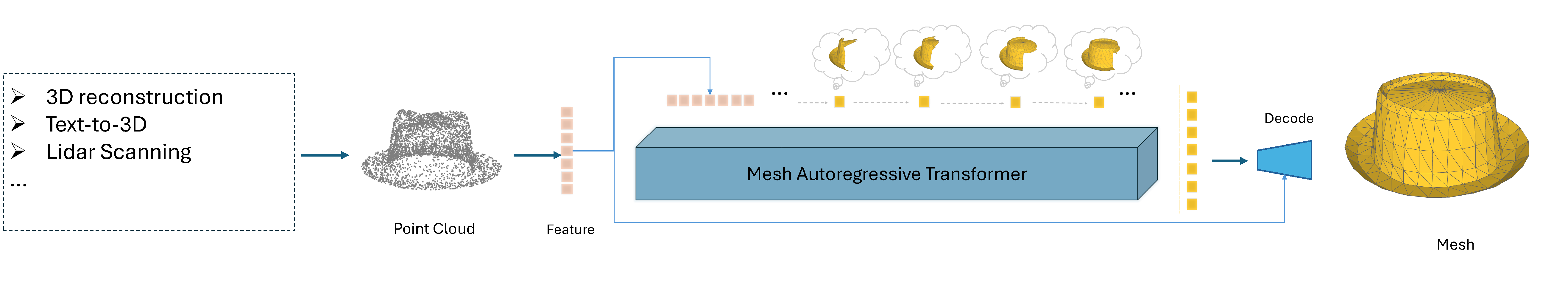

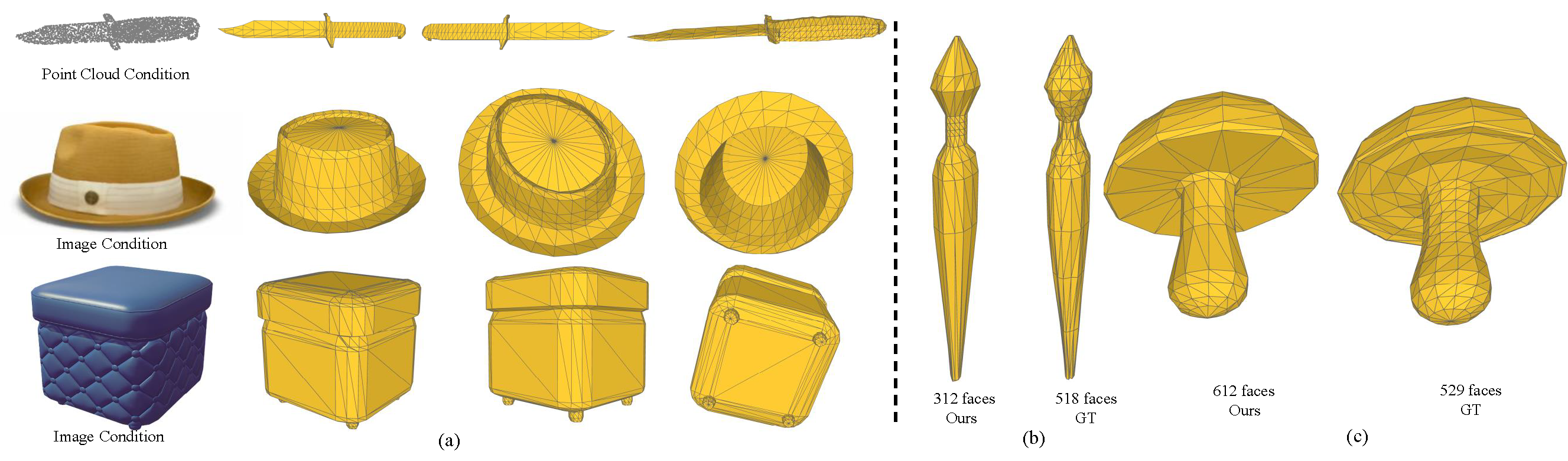

MeshAnything is an autoregressive transformer capable of generating Artist-Created Meshes that adhere to given 3D shapes. We sample point clouds from given 3D assets, encode them into features, and inject them into the decoder-only transformer to achieve shape-conditional mesh generation.
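The first step above, sampling a point cloud from a 3D asset, can be sketched for the case where the asset is already a triangle mesh. This is a generic area-weighted surface sampler, assumed here for illustration; the text does not specify how MeshAnything samples points, and the function names and the toy square mesh are hypothetical. The point-cloud encoder and the transformer are not shown.

```python
import math
import random
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Face = Tuple[int, int, int]


def triangle_area(a: Vec3, b: Vec3, c: Vec3) -> float:
    """Area of triangle abc: half the norm of the cross product of two edges."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cx, cy, cz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)


def sample_point_cloud(
    vertices: List[Vec3], faces: List[Face], n: int, seed: int = 0
) -> List[Vec3]:
    """Sample n points uniformly over the mesh surface."""
    rng = random.Random(seed)
    # Pick faces with probability proportional to area so density is uniform.
    areas = [triangle_area(vertices[i], vertices[j], vertices[k]) for i, j, k in faces]
    chosen = rng.choices(faces, weights=areas, k=n)
    points: List[Vec3] = []
    for i, j, k in chosen:
        a, b, c = vertices[i], vertices[j], vertices[k]
        # Square-root trick gives uniform barycentric weights inside a triangle.
        s = math.sqrt(rng.random())
        r = rng.random()
        w0, w1, w2 = 1.0 - s, s * (1.0 - r), s * r
        points.append(
            (
                w0 * a[0] + w1 * b[0] + w2 * c[0],
                w0 * a[1] + w1 * b[1] + w2 * c[1],
                w0 * a[2] + w1 * b[2] + w2 * c[2],
            )
        )
    return points


# Toy asset: a unit square in the z = 0 plane, split into two triangles.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
faces = [(0, 1, 2), (0, 2, 3)]
cloud = sample_point_cloud(vertices, faces, n=256)
```

Assets in other representations would need a different way to obtain surface points before this conditioning step; that choice is outside what the text describes.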

Compared to methods like MeshGPT that directly generate Artist-Created Meshes, our approach avoids learning complex 3D shape distributions. Instead, it focuses on efficiently constructing shapes through optimized topology, significantly reducing the training burden and enhancing scalability.
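One way to read this contrast, written in notation we introduce here for illustration: let a mesh $\mathcal{M}$ be tokenized as $(t_1, \dots, t_N)$ and let $\mathcal{S}$ denote the given shape. A direct generator must model the distribution of meshes itself, whereas a shape-conditioned generator only models how to build a mesh for a shape it is handed:

$$
p(\mathcal{M}) = \prod_{i=1}^{N} p(t_i \mid t_{<i})
\qquad \text{versus} \qquad
p(\mathcal{M} \mid \mathcal{S}) = \prod_{i=1}^{N} p(t_i \mid t_{<i}, \mathcal{S})
$$

Under this reading, the shape information arrives through $\mathcal{S}$, so the model's capacity can go toward choosing topology rather than inventing geometry.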

By integrating with various 3D asset production methods, our approach achieves highly controllable Artist-Created Mesh generation. We also compare our results with the ground truth in (b) and (c). In (b), MeshAnything generates meshes with better topology and fewer faces than the ground truth. In (c), it produces meshes with a completely different topology while achieving a similar shape, indicating that our method does not simply overfit but has learned to construct meshes using efficient topology.

@misc{chen2024meshanything,
  title={MeshAnything: Artist-Created Mesh Generation with Autoregressive Transformers},
  author={Yiwen Chen and Tong He and Di Huang and Weicai Ye and Sijin Chen and Jiaxiang Tang and Xin Chen and Zhongang Cai and Lei Yang and Gang Yu and Guosheng Lin and Chi Zhang},
  year={2024},
  eprint={2406.10163},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}